What DIVD found in your Mendix app

Reading time: ±5 minutes

1. A well-known problem on a larger scale

In February 2026, DIVD published a report on more than 2,000 Mendix applications that exposed sensitive data to the public internet. No sophisticated attack, no zero-day exploit, just a browser and a little curiosity. All that was missing was proper configuration.

Perhaps even more uncomfortable: this was not the first time. DIVD had already conducted the same study in 2022. At the time, the organizations involved were notified, the vulnerable apps were fixed, and the problem was solved. Until next time.

This raises a question this blog tries to answer: if the problem is known, the solution is documented and the tools are available, why does it keep coming back? The answer is not technical; it is organizational. In many Mendix teams, security is treated as a final check rather than as part of the development process. And a final check is easy to skip.

In this blog, we look at exactly what DIVD found, why these misconfigurations are so persistent and, more importantly, how to organize security so that you can face the next DIVD round with confidence, starting from one basic question: are you in control?

2. The core of the problem

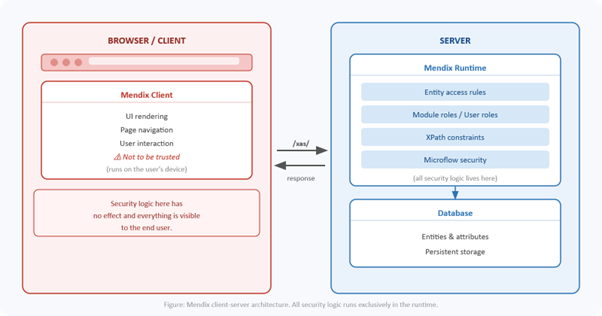

At its core, the Mendix security model is simple. A Mendix app consists of two layers: the Mendix Client, which runs in the user’s browser, and the Mendix Runtime Server, which runs at the back end. The client communicates with the runtime via the /xas/ request handler, the Mendix Client API. Everything you see on the screen arrives through that route.

Security is enforced solely in the runtime. Entity access rules, module roles, user roles and XPath constraints determine what a user is allowed to request and see. Mendix is explicit about this: the client is by definition untrusted. Anything running on the user’s device is outside the server’s control. That is not a shortcoming of the platform; it is a conscious architectural choice, and a correct one. In fact, it is one of Mendix’s strengths over alternatives.
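To make that division of labour concrete, here is a deliberately simplified sketch in Python. It is not Mendix’s implementation: the classes, role names and the lambda that stands in for an XPath constraint are all hypothetical. What it shows is the principle: the server decides which rows and attributes leave the runtime, rules are additive, and anything no rule grants is never returned.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical, simplified model of server-side entity access; this is not
# Mendix's implementation. A constraint is a Python predicate standing in for
# an XPath constraint such as [System.owner='[%CurrentUser%]'].


@dataclass
class User:
    name: str
    user_roles: set[str]


Constraint = Callable[[dict, User], bool]


@dataclass
class AccessRule:
    entity: str
    module_role: str
    readable_members: set[str]
    constraint: Optional[Constraint] = None  # None means "no XPath constraint"


# User roles map to module roles, as in the project security settings.
USER_ROLE_TO_MODULE_ROLES: dict[str, set[str]] = {
    "Anonymous": set(),
    "Customer": {"Sales.Customer"},
}

RULES: list[AccessRule] = [
    AccessRule(
        entity="Sales.Order",
        module_role="Sales.Customer",
        readable_members={"Number", "Total"},
        constraint=lambda row, user: row["Owner"] == user.name,
    ),
]


def retrieve(user: User, entity: str, rows: list[dict]) -> list[dict]:
    """Return only the rows and members this user may read.

    Whatever the client asks for, the filtering happens here on the server.
    Rules are additive; a row that no rule grants is dropped (default deny).
    """
    module_roles: set[str] = set()
    for role in user.user_roles:
        module_roles |= USER_ROLE_TO_MODULE_ROLES.get(role, set())

    result = []
    for row in rows:
        visible: set[str] = set()
        for rule in RULES:
            if rule.entity != entity or rule.module_role not in module_roles:
                continue
            if rule.constraint is None or rule.constraint(row, user):
                visible |= rule.readable_members
        if visible:
            result.append({k: v for k, v in row.items() if k in visible})
    return result
```

With two orders owned by "alice" and "bob", `retrieve(User("alice", {"Customer"}), "Sales.Order", orders)` returns only Alice’s order, limited to `Number` and `Total`, while an anonymous user gets an empty list. Drop the lambda and every customer sees every order: exactly the missing-XPath pattern DIVD keeps finding.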

The problem arises when those server rules are not configured properly. The runtime then obediently serves data it shouldn’t. No exploit required, no special knowledge required; a regular /xas/ call is enough. It’s not a hack, it’s a “feature”.

In practice, DIVD found a number of recurring patterns: anonymous users with read access to private entities, module roles that are set too broadly, XPath constraints that are missing or incomplete, and microflows that quietly bypass access controls.
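These four patterns are regular enough to check mechanically. The sketch below assumes a hypothetical, flattened snapshot of an app’s security settings; the class and field names are illustrative and do not correspond to a Mendix export format. Only the idea behind `apply_entity_access` comes from Mendix itself (a microflow’s “Apply entity access” property). The list of sensitive entities has to come from the team, and “too broad” is reduced to an arbitrary threshold.

```python
from dataclasses import dataclass

# Hypothetical, flattened snapshot of an app's security settings.
# Class and field names are illustrative; this is not a Mendix export format.


@dataclass
class ReadRule:
    entity: str
    module_role: str
    has_xpath: bool  # True if the access rule carries an XPath constraint


@dataclass
class Microflow:
    name: str
    allowed_module_roles: set[str]
    apply_entity_access: bool  # mirrors the microflow's "Apply entity access" property


def scan(
    rules: list[ReadRule],
    microflows: list[Microflow],
    sensitive: set[str],
    anonymous_module_roles: set[str],
    max_sensitive_per_role: int = 3,
) -> list[str]:
    """Flag anonymous read access, missing XPath constraints,
    overly broad module roles and microflows that skip entity access."""
    findings: list[str] = []
    per_role: dict[str, set[str]] = {}

    for r in rules:
        if r.entity not in sensitive:
            continue
        per_role.setdefault(r.module_role, set()).add(r.entity)
        if r.module_role in anonymous_module_roles:
            findings.append(f"anonymous read: {r.module_role} -> {r.entity}")
        if not r.has_xpath:
            findings.append(f"no XPath constraint: {r.module_role} -> {r.entity}")

    # "Too broad" is a judgment call; here it is simply a threshold.
    for role, entities in sorted(per_role.items()):
        if len(entities) > max_sensitive_per_role:
            findings.append(f"broad role: {role} reads {len(entities)} sensitive entities")

    for mf in microflows:
        if not mf.apply_entity_access and mf.allowed_module_roles & anonymous_module_roles:
            findings.append(f"access bypass: {mf.name} skips entity access for anonymous users")

    return findings
```

A scan like this cannot judge whether an XPath constraint is *correct*, only whether one is present, so it complements review rather than replacing it.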

The consequences are concrete: exposed names, addresses, customer files, internal documents and sometimes identity documents. That means GDPR risk, risk of fraud and phishing, and reputational damage. Most insidious of all, abuse is hard to detect: in the logs, a /xas/ call from a malicious actor looks exactly like a call from a regular user.

The irony is that Mendix’s security model works exactly as it should. The problem is not in the platform, it’s in the process.

3. How to do it

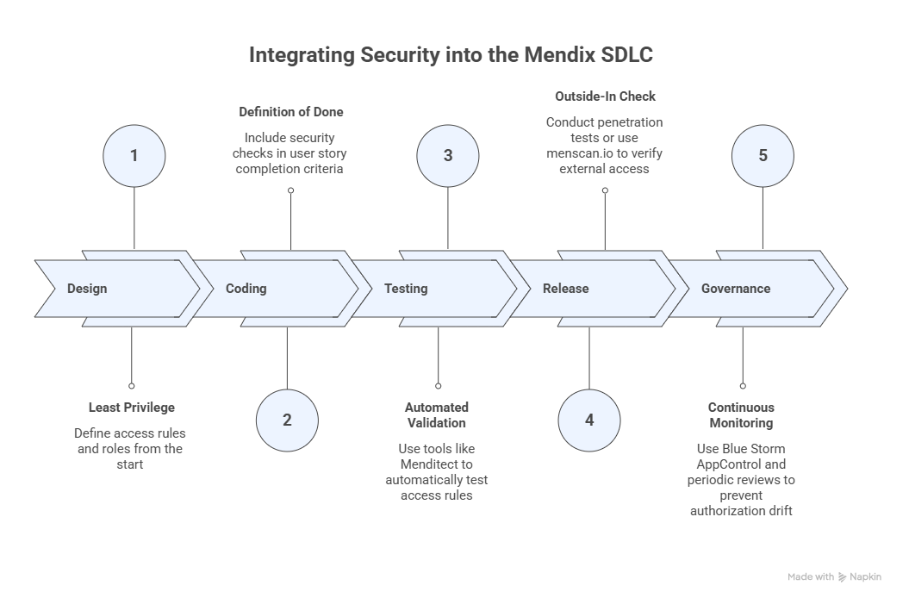

The good news is that this pattern can be broken, but not with a one-time fix. Security should be a feature of the entire development process, not a checkpoint at the end. The Software Development Life Cycle (SDLC) offers a natural framework for this. In each phase, choices can be made that structurally reduce the risk.

3.1 Design: least privilege as a starting point

The first choice is the most important: no access by default unless explicitly granted, not the other way around. That sounds obvious, but in practice it is tempting to start broad during modeling and restrict access later. Yet nothing is as permanent as a temporary solution. Least privilege means asking, for every new entity: who is allowed to see this, and under what condition? That question belongs in the design conversation, not in the post-mortem.

For teams on Mendix 10.12 or higher, there is also Strict Mode. It limits what the client can request, regardless of the access rules, and thus acts as a safety net behind the configuration. It is not a substitute for correct settings, but it is a meaningful second layer.

3.2 Coding: security as part of the Definition of Done

An entity without proper access rules is not a minor oversight; it is an open door. By explicitly including security in the Definition of Done of every user story that changes the domain model, it stops being something you can forget and becomes a completion criterion. No user story is done until its access rules, module role mappings and XPath constraints have been checked. Combined with peer review (four eyes on every security-sensitive change), this becomes a structural brake on creeping misconfiguration.
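The Definition of Done can be made checkable rather than aspirational. The sketch below is hypothetical: `StoryChange` and its fields stand in for whatever your version control and review tooling can report about a story. It fails a story when a changed entity has no access rules or when nobody other than the author reviewed it.

```python
from dataclasses import dataclass

# Hypothetical record of one user story's domain-model changes; in practice
# this information would come from version control and review tooling.


@dataclass
class StoryChange:
    story: str
    author: str
    changed_entities: set[str]
    reviewers: set[str]


def dod_failures(change: StoryChange, entities_with_rules: set[str]) -> list[str]:
    """Return the security items that keep this story from being 'done'."""
    failures = [
        f"{change.story}: {entity} has no access rules"
        for entity in sorted(change.changed_entities - entities_with_rules)
    ]
    # Four eyes: at least one reviewer who is not the author.
    if not change.reviewers - {change.author}:
        failures.append(f"{change.story}: not reviewed by anyone other than {change.author}")
    return failures
```

A pipeline step could refuse to merge while the returned list is non-empty; the point is that “done” is decided by the check, not by memory.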

3.3 Testing: automated validation

Access rules can also be tested automatically, for example with Menditect. This is optional, but it significantly narrows the gap between peer review and the release check. A failing test for a missing access rule is always more pleasant than a notification from DIVD.
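What such a test expresses can be sketched without any specific tool. The example below is not Menditect’s approach or API; it uses plain pytest-style test functions over a hard-coded, hypothetical permission table. In a real project that table would be derived from the model, which is left open here.

```python
# Hypothetical permission table: (module role, entity) -> readable members.
# In a real project this would be derived from the model; here it is hard-coded.
PERMISSIONS: dict[tuple[str, str], set[str]] = {
    ("Sales.User", "Sales.Customer"): {"Name", "Email"},
    ("Sales.Anonymous", "Sales.Product"): {"Name", "Price"},
}

# Hypothetical user-role to module-role mapping.
USER_ROLES: dict[str, set[str]] = {
    "Anonymous": {"Sales.Anonymous"},
    "User": {"Sales.User"},
}


def readable_members(user_role: str, entity: str) -> set[str]:
    """Union of the members all module roles of this user role may read."""
    members: set[str] = set()
    for module_role in USER_ROLES.get(user_role, set()):
        members |= PERMISSIONS.get((module_role, entity), set())
    return members


def test_anonymous_cannot_read_customers():
    assert readable_members("Anonymous", "Sales.Customer") == set()


def test_anonymous_sees_only_public_product_fields():
    assert readable_members("Anonymous", "Sales.Product") <= {"Name", "Price"}


def test_unknown_role_gets_nothing():
    assert readable_members("Intruder", "Sales.Customer") == set()
```

Running `pytest` on this file executes the three tests; adding a read rule for `Sales.Anonymous` on `Sales.Customer` would turn the first one red before anything reaches production.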

3.4 Release: the outside-in check

Before any production deployment, it is worth approaching the app as an anonymous user: what is visible from the outside? The most thorough way is a penetration test, in which a security specialist systematically tries to reach data they should not be able to see. For teams that cannot do this for every release, menscan.io is a fast, accessible and lighter alternative. It uses the same Mendix Client API as the browser and simulates exactly what an attacker would see without credentials. Among other things, it checks for anonymous access, demo users that are still active in production, and exposed metadata. It takes little time and prevents a lot.

3.5 Governance: continuous feedback

A one-time check at release is not enough. Authorization drift happens between releases: new modules, copied configurations, silent role changes. Blue Storm’s AppControl continuously monitors the entire Mendix portfolio. It scans both the project model and the deployed apps, enforces centrally defined security policies through pipeline checks, and flags drift before it reaches production. Combined with a periodic access review (especially after team changes or major functional expansions), governance becomes a permanent part of the landscape.
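The core idea of drift detection fits in a few lines, independent of any product. This is not how AppControl works; it is a minimal sketch that compares two hypothetical permission snapshots, for example one from the last approved release and one from the current branch. How the snapshots are extracted from the model is left open.

```python
# Hypothetical permission snapshot: (module role, entity) -> readable members.
# How snapshots are extracted from the model is out of scope here.
Snapshot = dict[tuple[str, str], frozenset[str]]


def detect_drift(baseline: Snapshot, current: Snapshot) -> list[str]:
    """Report every permission that was added or removed since the baseline."""
    findings: list[str] = []
    for key in sorted(baseline.keys() | current.keys()):
        before = baseline.get(key, frozenset())
        after = current.get(key, frozenset())
        role, entity = key
        if after - before:
            # Widened access is what usually deserves a human look.
            findings.append(f"widened {role} -> {entity}: +{sorted(after - before)}")
        if before - after:
            findings.append(f"narrowed {role} -> {entity}: -{sorted(before - after)}")
    return findings
```

Separating “widened” from “narrowed” is a deliberate choice: narrowing rarely causes a data leak, so a pipeline could block only on widened entries and route them to the periodic access review.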

Conclusion

The DIVD report is no reason to panic. It is not a platform bug, there is no urgent patch, and you do not have to take your app offline. But it is a signal: a reminder that security does not automatically stay right as an application grows, a team changes or a deadline approaches.

The approach is not complicated: least privilege as the starting point in design, security as a fixed part of the Definition of Done, an outside-in check for each release, and continuous governance over the portfolio. None of these measures is particularly demanding; together, they form a process that catches misconfigurations before they reach production. Trust in your team and its agreements is a strong foundation; continuous governance is better.

Mendix gives you the right tools to build secure apps. What DIVD shows (again) is that having those tools is not the same as using them. The difference is not in the platform. It’s in the process.

Author: Stephan Wolbers – Head of Technology & Innovation MxBlue | SUPERP